TL;DR

We run a full RL pipeline on the DeepRobotics LYNX M20 wheel-legged quadruped: train a MoE Teacher-Student policy in IsaacLab, export to ONNX, validate with a custom C++ inference pipeline in MuJoCo (chasing down four non-trivial bugs along the way — an interleaved history buffer, a wrong LiDAR-scan geometry, a missing terrain match, and a cold-start segfault), and then rebuild the cross-host communication so a 1.3 MB-per-frame point cloud goes from a 1 Hz collapse to a stable 10 Hz, finally enabling elevation mapping on the remote workstation. This post walks through the whole chain.

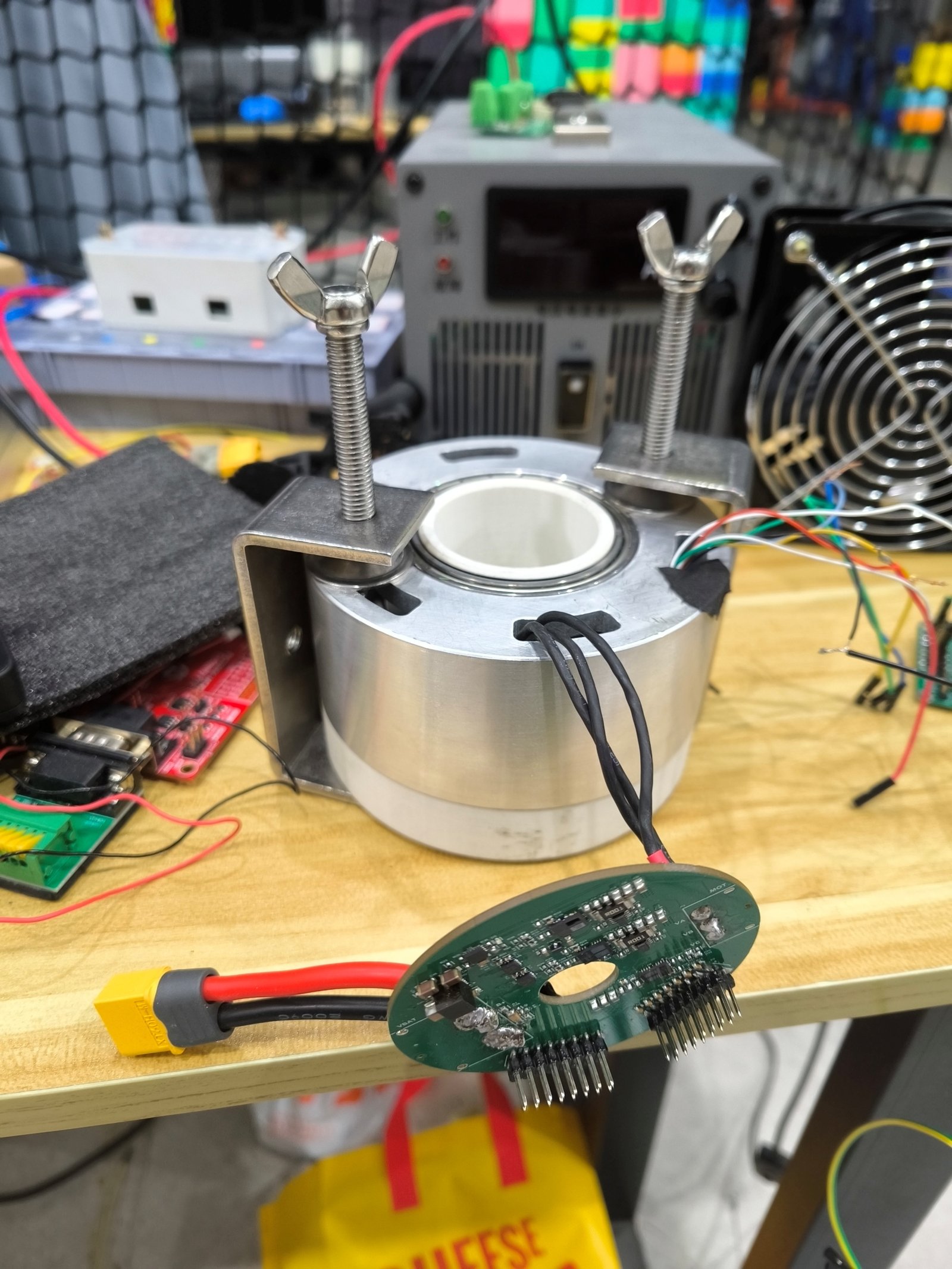

Fig. 1 — M20 on the lab obstacle course.

1. Platform and Goal

Robot: DeepRobotics LYNX M20, a wheel-legged hybrid — each leg ends in a driven wheel. Faster than a pure quadruped on flats, more capable than a pure wheeled platform over steps and gaps. Twelve leg-joint DoFs plus four wheel-velocity channels.

Perception: a 96-line hemispherical LiDAR mounted on top:

| Spec | Value |

|---|---|

| Lines | 96 |

| Horizontal FoV | 0–360° |

| Vertical FoV | 0–90° |

| Range | 60 m (30 m @ 10 % NIST) |

| Point rate | 856 K pts/s (single echo) / 1.71 M (dual) |

| Eye safety | Class 1 |

Fig. 2 — LYNX M20 front view.

Goal: have M20 traverse irregular terrain (steps / gaps / platforms / slopes / scattered crates) under a single unified policy that does velocity tracking and posture maintenance, replacing the hand-coded gait state machines.

2. Training: MoE Teacher-Student

2.1 Framework

We forked DeepRobotics’ open-source rl_training on IsaacLab 2.3 / IsaacSim 5.1 and registered a family of M20 tasks:

| Task ID | Purpose |

|---|---|

Flat-Deeprobotics-M20-v0 |

Baseline, flat-ground velocity tracking |

Rough-Deeprobotics-M20-v0 |

Rough terrain |

Rough-MoE-Teacher-Deeprobotics-M20-v0 |

Multi-expert teacher |

Rough-EleMoE-Teacher-... |

MoE with elevation perception |

teacher_{acrobatic, gap, platform, rail, scan, elevation, ...} |

Specialized skill teachers |

2.2 Actor Architecture

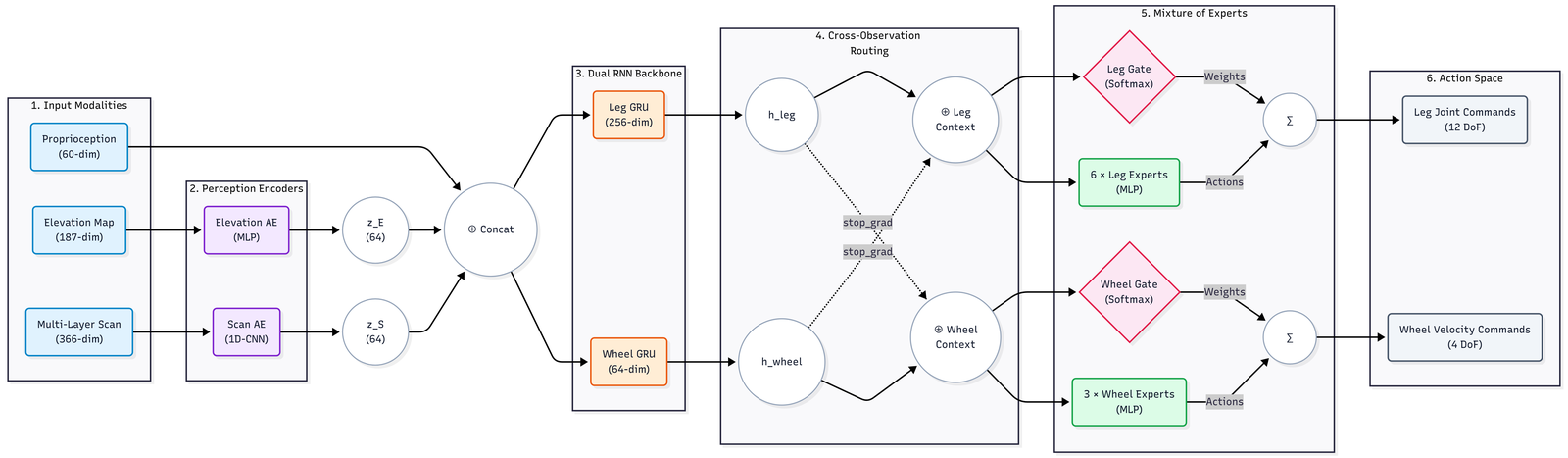

An Estimator + Dual-RNN backbone + Mixture of Experts Actor:

Fig. 3 — MoE LSTM Actor overview.

Three input streams:

- Proprioception (60-dim) — base 6-DoF state + 12 joint velocities + command + last action

- Elevation map (187-dim) — compressed via an MLP autoencoder to 64-dim latent $z_E$

- Multi-layer scan (366-dim) — compressed via a 1D-CNN AE to 64-dim latent $z_S$

Concatenated and fed into the dual-RNN backbone:

Leg GRU (256-dim)for the legsWheel GRU (64-dim)for the wheels

Cross-Observation Routing lets each context peek at the other while a stop_grad cuts the back-prop coupling, so leg / wheel temporal states stay independent during updates.

Then the MoE:

- Leg MoE: 6 leg experts + Softmax gate → 12-D joint commands

- Wheel MoE: 3 wheel experts + Softmax gate → 4-D wheel velocity

2.3 LiDAR Detail

The Teacher sees ground-truth ray hits; the Student (the policy that ships) gets a small “blind-zone hallucination”:

# rl_training/.../moe_teacher_env_cfg.py

def process_lidar_data(depths, is_student):

# 1) tanh-normalize to [0, 1)

normalized = torch.tanh(depths / 10.0)

# 2) For points closer than 0.3 m, fill with a "5 m far" tanh value

# so the student doesn't read missing returns as "wall in face".

if is_student:

blind_fill = torch.tanh(torch.tensor(5.0 / 10.0))

normalized = torch.where(depths < 0.3, blind_fill, normalized)

return normalized

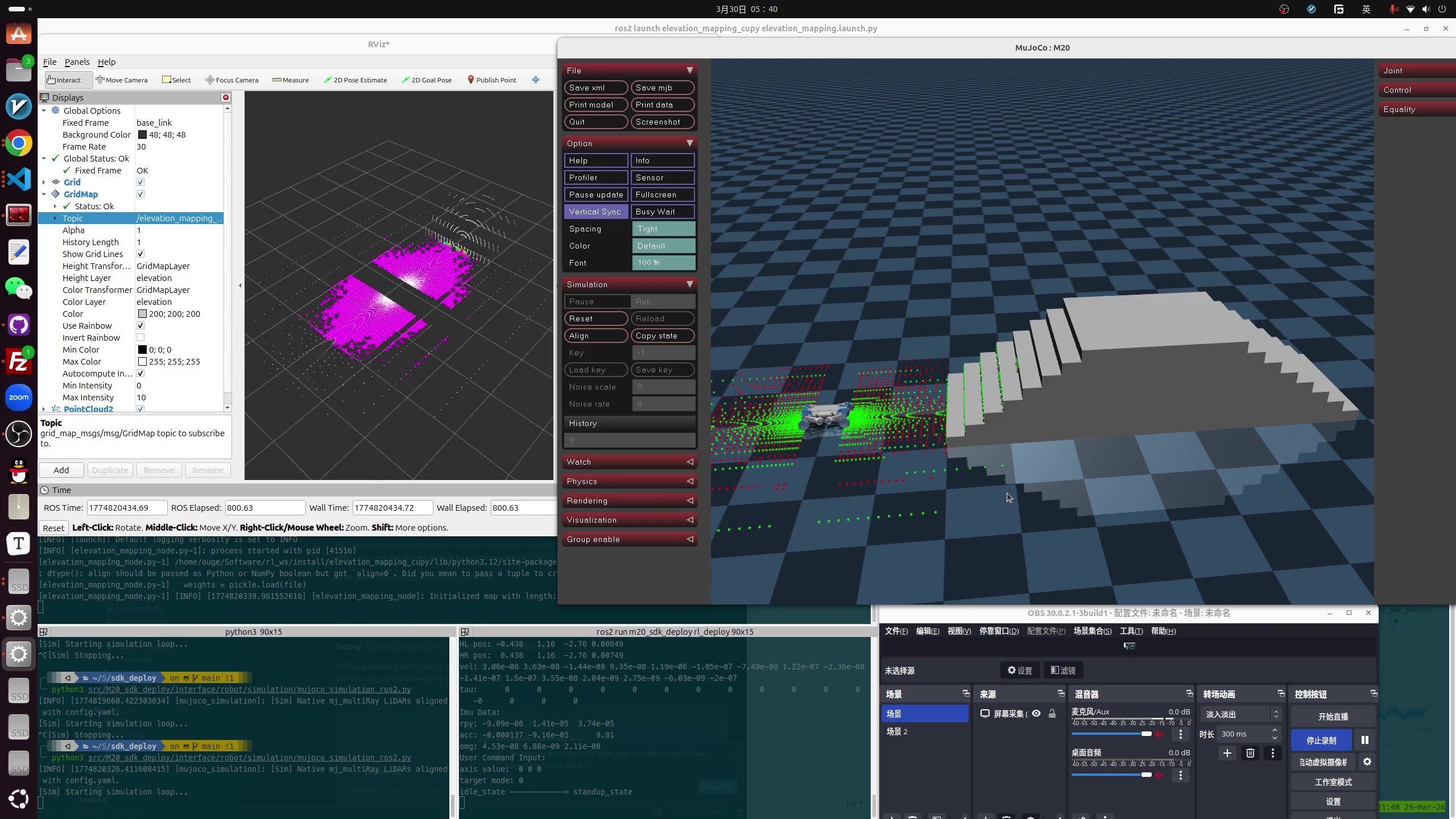

3. Sim2Sim: Drop the Policy into MuJoCo

Training happens in IsaacLab, but the deployment-side simulator is MuJoCo. A custom C++ inference pipeline wires the ONNX policy directly into MuJoCo:

Fig. 4 — IsaacLab training terrains re-created in MuJoCo (pyramid steps, hurdles, platforms, scattered crates, continuous slopes …).

Video 1 — The same policy traversing four representative terrain types in MuJoCo (pyramid stairs, gap, floating ring, continuous slope).

State extraction Obs assembly

Base ω, gravity, cmd → Proprio [57]

Joint pos / vel History [855] ──▶ ONNX (Unified MoE) Engine

Elevation [187] ──▶ Estimator (GRU)

Lidar Scan [252]

↓

Action scale + offset → joint targets / wheel cmd

Four non-trivial bugs surfaced during bring-up:

3.1 History buffer: per-frame merging trashed the RNN

The original C++ stitched [t-14, ..., t] of the multi-stream observations together frame by frame, but the RNN expects features grouped by feature type. The interleaved layout slid every feature off by one slot, the GRU hidden state slowly drifted, and the action diverged.

Fix: De-interleave — pack each obs channel into its own contiguous block, then concatenate.

3.2 LiDAR scan geometry was 2D

Initially we approximated the scan with 2D parallel height slices for speed, while training used real pitched rays — input distribution didn’t match.

Fix: re-implement using real pitch angles and strict 3D Euclidean distance, exactly mirroring IsaacLab’s ray caster.

3.3 MuJoCo terrain generator rebuilt

Re-generated all nine training-side terrain types in MuJoCo so the Sim2Sim domain stays consistent:

Pyramid stairs (up / down) · Gap · Hurdle · Floating ring (no collision) · Platform · Scattered crates · Random rough · Continuous slope (up / down)

3.4 FSM switch and cold-start protection — the segfault story

Hot-switching into the RL policy occasionally produced violent twitches or even Segfault -11. Four root causes, all fixed:

| Symptom | Fix |

|---|---|

last_action zero-init → huge PD error at the switch instant, robot kicks the floor |

Inverse-solve last_action from the actual joint positions at switch time |

| First 15 history frames zero-filled → “ghost velocity” | Zero out angular & joint velocities when seeding the buffer |

| Action jump on switch | Apply a 30-step (0.6 s) soft transition, and during the blend overwrite last_action with the post-intervention command actually sent to the joints, so latent error doesn’t accumulate |

memset over a struct containing Eigen dynamic objects → corrupt internal pointers → segfault |

Drop those memsets, use explicit constructors |

4. Sim2Real: Communication Redesign

4.1 Three-host topology

🤖 103 perception host 💻 remote desktop

/LIDAR/POINTS ┌──────────────────────┐

LIO Odometry (/LIO_ODOM) │ C++ Receiver │

│ │ FastDDS SHM (2 MB) │

│ LAN │ Elevation Mapping │

▼ │ (Cupy / CUDA) │

🔀 106 forwarder ─── WiFi ─────────────────────▶ └──────────────────────┘

forwarder.py (socat)

4.2 Why it crashed

Issue 1: cross-segment DDS packet loss. A 1.3 MB / frame point cloud over WiFi UDP gets fragmented into dozens of pieces; lose any one and the whole frame is dropped. Effective rate collapsed to 1 Hz. Trying to bump the transport size in fastdds_profile.xml hit hard internal limits and DDS refused to start.

Issue 2: LIO never produced odometry. The lidar is physically at X = 0.32 m on the 103 chassis, but extrinsic_B_L defaulted to 0. The contradiction made LIO refuse to initialize — /ACCUMULATED_POINTS_MAP kept ticking but /LIO_ODOM was empty, so the remote side had no odom → base_link and no basis for elevation mapping.

4.3 Three-end TCP dual-tunnel transport

Dodge the DDS multicast / QoS retransmit storm:

- Source proxy on 103 subscribes to the local DDS and serializes messages to raw bytes

- Decoupled channels: forwarder 106 runs two TCP tunnels —

9998(raw cloud) +9996(accumulated map) — both with TCP_NODELAY, killing Nagle - C++ strong sync: the remote desktop runs two parallel receiver threads; after de-serialization, packets are stamped with

this->now()to absorb the host clock skew - Local SHM zero-copy: a custom Jazzy XML enables a 2 MB shared-memory transport, so on the desktop the receiver hands data to the elevation mapper without copies

Cross-network rate is back to a stable 10 Hz — cleaner than the original DDS path.

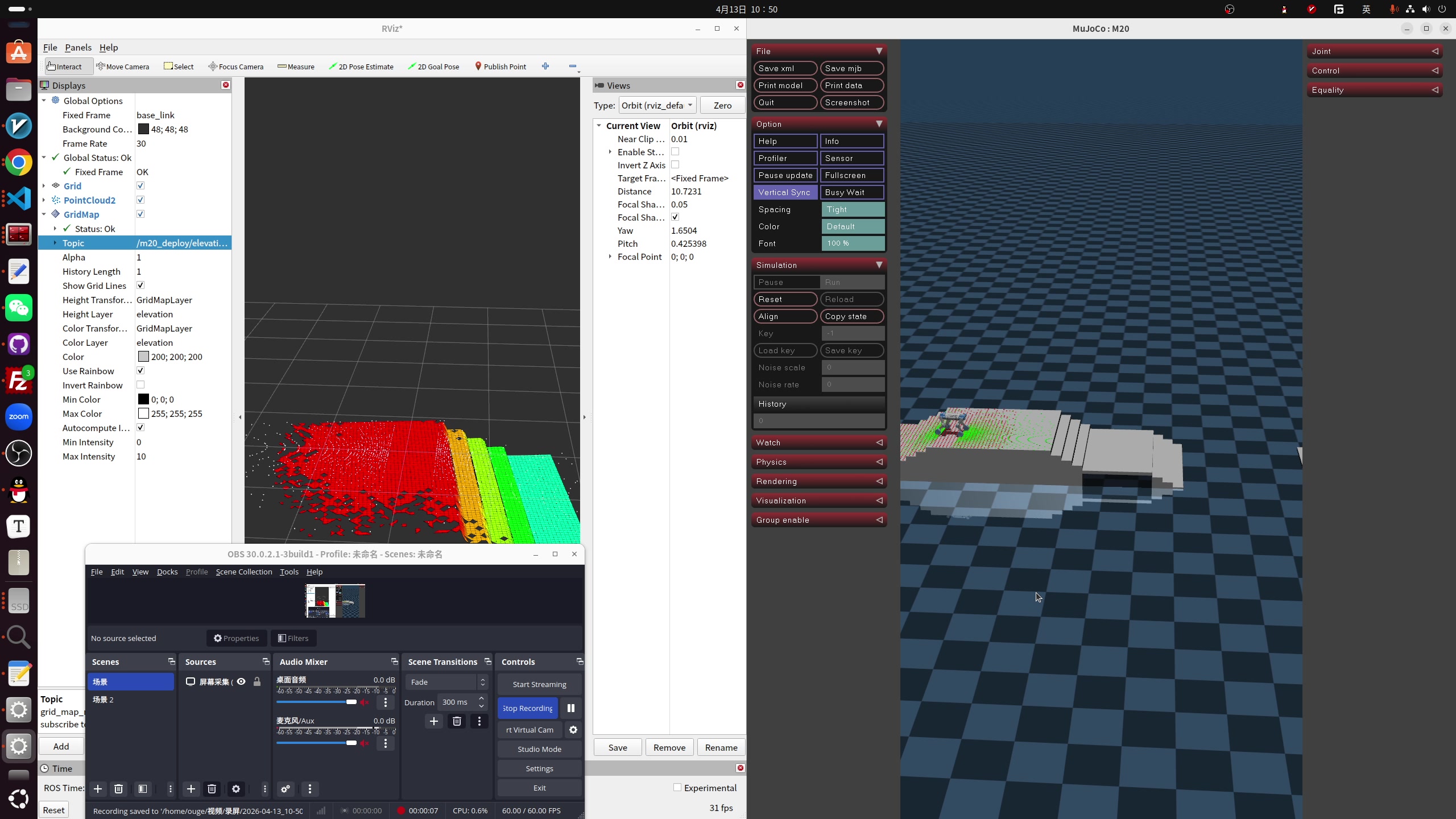

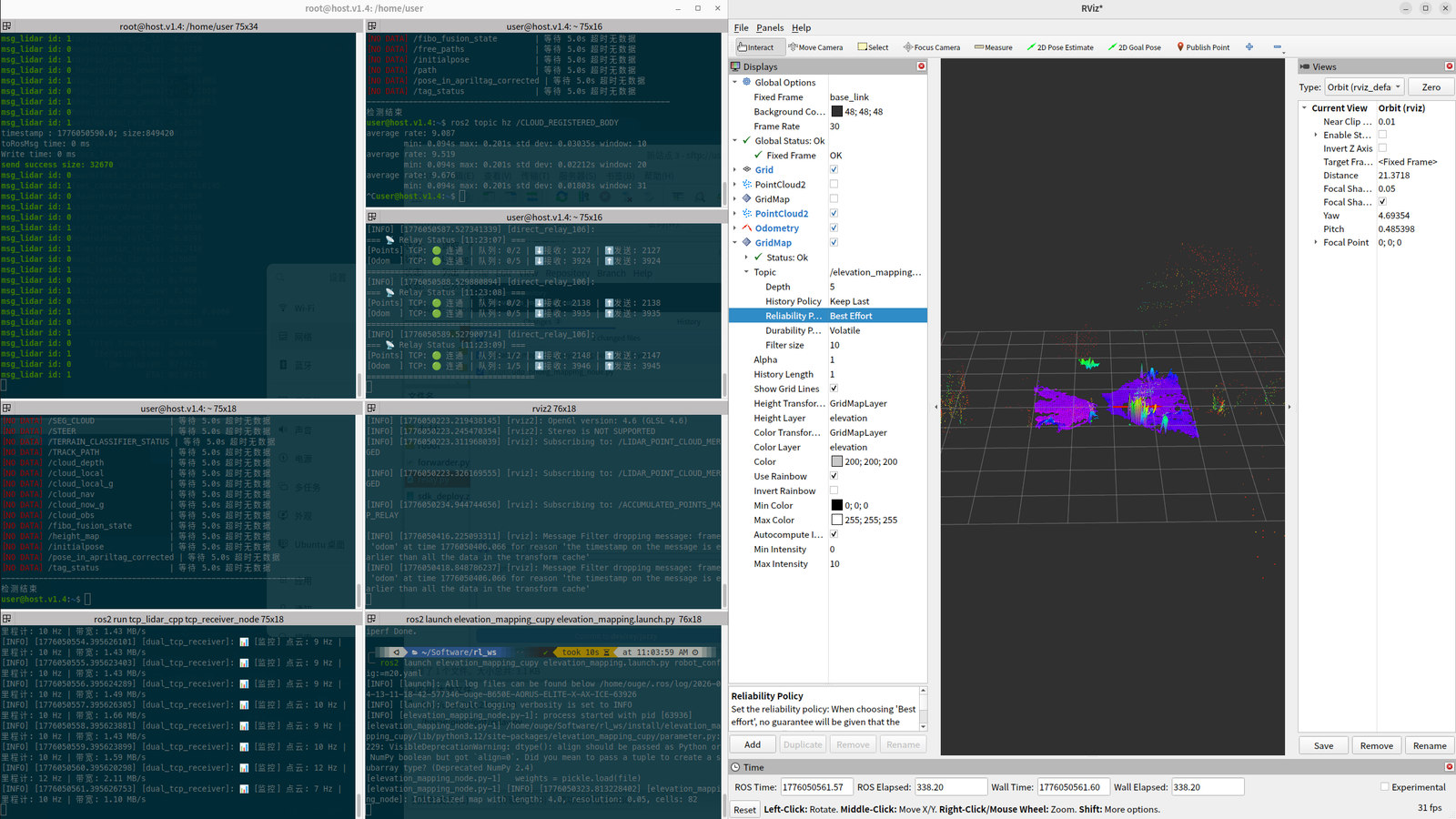

5. Elevation Mapping: TF and Frame Alignment

With the link up, the Cupy node started rendering elevation_map_raw, but two more issues surfaced:

Fig. 5 — Elevation Mapping mesh in RViz.

5.1 No TF tree from the robot

The robot side published no TF at all, so the elevation mapper couldn’t find lidar_link → odom and complained constantly. Fix: at the C++ receiver, ignore the source frame, force-build odom → base_link, and rewrite the cloud’s frame_id to base_link.

5.2 The 40-second gap

Forwarder 106 and the desktop disagreed on system time by 40.3 s, so the elevation node tagged the cloud as “stale data from the past dynasty” and dropped it. Fix: discard the original timestamps from 106 and stamp both clouds and odom with this->now() on the desktop.

Fig. 6 — Live debugging: terminals on the left, RViz with odom + cloud on the right.

Video 2 — After the transport rebuild and timestamp rewrite, Elevation Mapping renders the grid steadily at 10 Hz in RViz.

5.3 The one still open: map rotation tracking

In RViz the cloud now rotates with the robot, but the elevation grid’s X / Y axes stay locked to global north — the map gets “left behind” when the robot turns. Setting robot_pose_topic and track_point_cloud_with_robot_rotation: true hasn’t fixed it. Top of the next-step queue.

6. Real-Robot Vignettes

Fig. 7 — 40 cm-clearance crawl test (clip from the 4.27 lab meeting).

Video 3 — 40 cm-clearance crawl on the real robot.

Video 4 — Multi-gait + multi-terrain composite test (4.27 lab-meeting full-test reel).

Tests on real hardware so far:

- Multi-gait switching (trot / pace / pronk / stand) — no more twitches at handover

- 40 cm crawl — wheel-legged form factor through low overhead

- Climbing real stairs — blind walk + scan blending with elevation map

- Full MuJoCo 4-terrain pass — pyramid stairs, gaps, floating rings, continuous slopes

7. Status & Next Steps

| Item | Status |

|---|---|

| MoE Teacher training + Student distillation | ✅ |

| Estimator + Dual-RNN + LiDAR AE | ✅ |

| C++ inference pipeline + ONNX deployment | ✅ |

| MuJoCo Sim2Sim across 4 terrain types | ✅ |

| FSM switch / cold-start protection | ✅ |

| Cross-network TCP dual tunnels (stable 10 Hz) | ✅ |

| Elevation Mapping pipeline | ✅ |

| Elevation map rotation tracking | 🟡 in progress |

| Multi-gait real-robot test | ✅ |

| Stairs / gaps on the real robot | ✅ partial |

| Unify with the ArcDog framework (extension legs) | 🟡 in progress |

Next: solve the rotation tracking; bridge LiDAR / RGB into a ROS 2 navigation stack so a joystick command can drive the dog autonomously to a target; converge the policy serialization (.pt ↔ .onnx, ONNX runtime is ~2× faster than PyTorch at inference in our benchmark) for a unified Sim2Sim → Sim2Real path.